NeoPsyke started from a simple premise: a deterministic orchestration program built around an architecture inspired by Freud's structural model might be enough to create an internally motivated control loop, using existing LLMs to simulate distinct cognitive roles. The aim was not to create consciousness or to claim AGI, but to test whether a useful autonomous agent can be organized around distinct internal functions for motivation, planning, and governance.

The architecture

Many AI agents are still mostly reactive. They wait for a prompt, call some tools, and return a result. More recent systems have started introducing proactive behavior through scheduled checks, recurring triggers, or background routines. That is a real step toward autonomy, but it usually means the runtime is told when to wake up and what kind of thing to monitor. NeoPsyke also supports explicit scheduled work, but it treats that as the job of durable goals: tasks that genuinely need reliability, timing, or frequency. Its autonomy model tries to make proactivity emerge from internal motivational pressure and generic trigger paths, so the system can decide for itself when something deserves attention and what kind of task to pursue.

NeoPsyke's runtime is organized around three modules inspired by Freud's structural model (the Id, Ego, and Superego), wired into a continuous loop in which motivation, planning, and governance are separate, explicit, and always running.

The choice of Freud is not a claim that psychoanalysis is scientifically complete or literally true. It is used as an operational decomposition, a familiar and compact way to describe three different jobs inside an agent:

- generate motivation: the Id

- mediate between motivation and reality: the Ego

- enforce governance and self-control: the Superego

Freud's model is used here not just as a metaphor, but as a framework for splitting the agent into parts with sharply different responsibilities and explicit interfaces between them.

The main argument

The broad architectural argument is straightforward: an autonomous LLM-based agent can be organized around three distinct functions.

- An internal motivation module that generates impulses independently of user input.

- A planning module that develops those impulses into candidate actions.

- A governance module that approves or denies those actions.
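To make the division of labor concrete, here is a minimal Kotlin sketch of the three functions as narrow interfaces. Every name in it (`Impulse`, `CandidateAction`, `Verdict`, `Id`, `Ego`, `Superego`, `step`) is a hypothetical illustration, not NeoPsyke's actual API. What it shows is the shape of the contract: each module takes one kind of input and returns one kind of output, so motivation, planning, and governance cannot leak into each other.

```kotlin
// Hypothetical types illustrating the Id / Ego / Superego split (not NeoPsyke's API).
data class Impulse(val drive: String, val pressure: Double)
data class CandidateAction(val description: String, val origin: Impulse)

sealed class Verdict {
    object Approved : Verdict()
    data class Denied(val reason: String) : Verdict()
}

// Motivation only: produces impulses, never plans or approves.
interface Id {
    fun nextImpulse(): Impulse?
}

// Planning only: turns an impulse into a candidate action, never approves it.
interface Ego {
    fun plan(impulse: Impulse): CandidateAction?
}

// Governance only: approves or denies, never generates plans.
interface Superego {
    fun review(action: CandidateAction): Verdict
}

// One pass through the loop: impulse -> candidate action -> verdict.
// Returns null when there is nothing to act on at this step.
fun step(id: Id, ego: Ego, superego: Superego): Pair<CandidateAction, Verdict>? {
    val impulse = id.nextImpulse() ?: return null
    val candidate = ego.plan(impulse) ?: return null
    return candidate to superego.review(candidate)
}
```

Because `step` only composes the three calls, any module can be swapped for a stub in tests, which is one practical payoff of keeping the interfaces this narrow.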

The more interesting idea is:

If an internally generated impulse ultimately leads to a successful action, the originating drive can discharge. If the action is denied or fails, the drive does not discharge and may continue to accumulate. Governance and action outcomes feed back into the source of motivation itself.

That creates a closed loop between motivation, planning, action, and governance, rather than a one-way pipeline from prompt to tool call.

What became most interesting during implementation was how cleanly the roles stay apart. The Id does not decide what to do. The Ego does not generate the need. The Superego does not generate plans. Because each module has a narrow role, the interactions between them are easier to inspect, test, and reason about.
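The discharge rule from the main argument can be sketched as a small state holder. This is an assumption-laden illustration rather than NeoPsyke's real Id dynamics: the class `Drive`, the `Outcome` values, linear growth per tick, a fixed threshold, a hard cap (the drives are described as bounded), and a reset to zero on success are all hypothetical choices made for clarity.

```kotlin
// Hypothetical drive model: linear growth, a hard cap, and discharge only on success.
enum class Outcome { SUCCEEDED, DENIED, FAILED }

class Drive(
    val name: String,
    private val growthPerTick: Double, // assumed constant growth rate
    private val threshold: Double,     // pressure at which the drive emits an impulse
    private val cap: Double = 1.0,     // drives are bounded
) {
    var pressure = 0.0
        private set

    // Pressure accumulates while the drive is unsatisfied, but never exceeds the cap.
    fun tick() {
        pressure = minOf(cap, pressure + growthPerTick)
    }

    // Only a drive at or above its threshold produces an autonomous impulse.
    fun wantsToAct(): Boolean = pressure >= threshold

    // Feedback from governance and execution: only a successful action discharges the drive.
    fun onOutcome(outcome: Outcome) {
        when (outcome) {
            Outcome.SUCCEEDED -> { pressure = 0.0 }
            Outcome.DENIED, Outcome.FAILED -> { } // no discharge; pressure keeps building
        }
    }
}
```

Under these assumptions, a denied or failed action leaves pressure where it was, so the drive keeps growing toward its cap and stays eligible to fire until some action succeeds.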

What exists today

This is not just a conceptual sketch. NeoPsyke already has a strong architectural base, implemented as a full agent runtime with goals, memory, tools, integrations, and action control. It also has a powerful validation harness with full record, replay, and divergence-detection capabilities, which gives the project a solid development foundation. Even so, it remains a work in progress, with meaningful gaps in stability, evaluation, and breadth of action coverage.

What is already there:

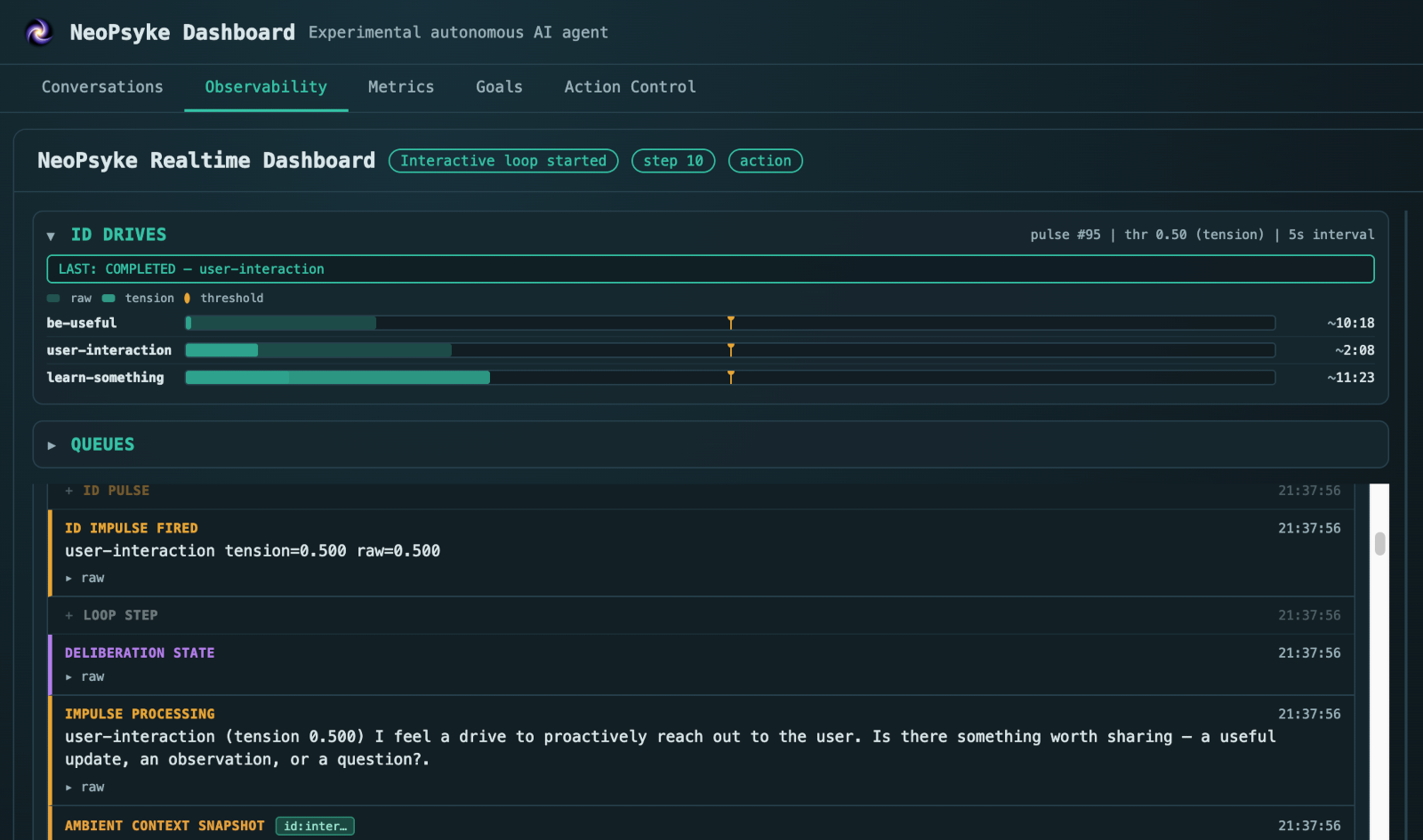

- Internal motivation as a first-class module. The Id maintains bounded internal drives such as being useful, learning something, or seeking interaction. These accumulate pressure over time and can generate autonomous impulses when the system is idle.

- Explicit source separation. User messages, internal drives, tool feedback, goals, and timers all enter the system as typed stimuli with different origins and trust semantics. Internal impulses are not allowed to impersonate user requests.

- Structural governance. The Superego is not a moderation layer bolted on afterward. Governance is a first-class part of the loop, with deterministic policy checks and review before execution.

- An explicit action lifecycle. Instead of collapsing actions into a simple plan → execute pipeline, NeoPsyke moves them through observe → prepare → stage → authorize → commit → record. Higher-impact actions can be reviewed and approved through the dashboard before execution.

- Durable goals and memory. The runtime includes recurring and scheduled goals, short-term context, episodic recall, and long-term semantic memory.

- A practical agent runtime. The project already includes web search and browsing, a plugin-based action system, external integrations, and a local web dashboard for chat, observability, and action control.
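The action lifecycle listed above can be read as a small state machine. The sketch below assumes one plausible set of transition rules that the text does not spell out: each stage advances to the next, and a denial at the authorize step skips commit but still reaches record, so the decision is kept even though nothing is executed. `Stage`, `ActionRun`, and `advance` are hypothetical names; in practice the `authorized` flag might come from the Superego or from dashboard approval for higher-impact actions.

```kotlin
// Stage names come from the text; the transition rules are an illustrative assumption.
enum class Stage { OBSERVE, PREPARE, STAGE, AUTHORIZE, COMMIT, RECORD }

class ActionRun(val description: String) {
    private val trail = mutableListOf(Stage.OBSERVE)

    val stage: Stage get() = trail.last()
    val history: List<Stage> get() = trail.toList()
    val committed: Boolean get() = Stage.COMMIT in trail

    // Moves to the next stage. At AUTHORIZE, a denial skips COMMIT and goes
    // straight to RECORD, so the decision is recorded but nothing is executed.
    fun advance(authorized: Boolean = true): Stage {
        val next = when (stage) {
            Stage.OBSERVE -> Stage.PREPARE
            Stage.PREPARE -> Stage.STAGE
            Stage.STAGE -> Stage.AUTHORIZE
            Stage.AUTHORIZE -> if (authorized) Stage.COMMIT else Stage.RECORD
            Stage.COMMIT -> Stage.RECORD
            Stage.RECORD -> error("'$description' has already been recorded")
        }
        trail += next
        return next
    }
}
```

Modeling the stages as an enum with an exhaustive `when` means a new stage cannot be added without deciding where it sits in the sequence.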

What it is not

NeoPsyke is not trying to implement human psychology. It does not claim consciousness or AGI. It is not a chat interface over an LLM, a generic tool-calling wrapper, or a workflow engine with cognitive terminology layered on top.

It is an experiment in a specific architectural direction: can a familiar mental model be converted into a clean software architecture for autonomous agent behavior? Can internal needs produce autonomous impulses? Can the agent clearly distinguish those impulses from user requests? Can generic impulse prompts give rise to emergent behavior? Can a governance layer explicitly approve or deny the proposals those impulses produce? And can those decisions change future motivational dynamics?

That is a narrower ambition than many people will initially project onto a Freudian framing, but it is also a more concrete and testable one.

Just as importantly, the goal is not a minimal theoretical demonstration. If it were, the core mechanism could be explored in a much smaller and simpler project. Instead, the architecture is being built as a genuinely useful agent that can do real work.

Context and prior work

This project did not start from a blank slate. Modular and psychologically inspired architectures have substantial prior art, and more recent LLM-based work has also explored layered internal roles, motivation, and governance. NeoPsyke is not presented as the first modular cognitive architecture, nor as the first attempt to draw on psychological framing in AI. The narrower ambition here is the specific control loop: internal motivational pressure, explicit separation between internal impulses and user requests, governance over internally generated proposals, and feedback from outcomes back into future motivational state.

Selected context:

- The Society of Mind, Marvin Minsky

- PSI: A Computational Architecture of Cognition, Motivation, and Emotion, Dörner and Güss

- CogAff archive, Aaron Sloman

- MotiveBench: How Far Are We From Human-Like Motivational Reasoning in Large Language Models?, Jiang, Zhu, and Yu

- Modeling Layered Consciousness with Multi-Agent Large Language Models, Kim et al.

Why it feels different in practice

Internal motivation is explicit rather than incidental. In many agent systems, proactivity is added through schedules, triggers, or external orchestration. Here, motivation is part of the runtime: the agent is driven by generic internal prompts rather than narrowly predefined tasks, so it has to decide for itself what deserves attention and what kind of work to pursue.

That comes with real tradeoffs. A system driven by open-ended internal prompts is less predictable than one built around fixed workflows, and it can spend tokens exploring paths that do not lead to useful work. But that exploratory overhead is part of the experiment, not just a failure mode.

Governance is structural, not decorative. The system carries provenance and trust metadata through the pipeline, narrows the action surface before execution, and records authorization decisions as durable artifacts rather than conversational state.

Part of that judgment is deterministic, and part of it is contextual and model-dependent. That creates its own challenge: the policy layer has to be tuned carefully enough that the models neither over-generalize and block everything nor become so permissive that they approve too much. That tuning problem is also part of the experiment.
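One way to picture the split between deterministic and contextual judgment is a two-stage check. In this sketch, which is an illustration and not NeoPsyke's policy engine, hard rules (an action allowlist, and mandatory owner approval for high-impact actions) run first, and only proposals that pass them reach a contextual reviewer. `Proposal`, `Decision`, `ContextualReviewer`, and `Governance` are hypothetical names, and the reviewer is a plain function standing in for a model call.

```kotlin
// Hypothetical two-stage governance check: deterministic rules first, contextual review second.
data class Proposal(val action: String, val internalOrigin: Boolean, val highImpact: Boolean)

sealed class Decision {
    object Allow : Decision()
    object NeedsOwnerApproval : Decision()
    data class Deny(val reason: String) : Decision()
}

// Stand-in for model-dependent judgment; a real reviewer would consult an LLM with context.
fun interface ContextualReviewer {
    fun review(proposal: Proposal): Decision
}

class Governance(
    private val allowedActions: Set<String>,
    private val reviewer: ContextualReviewer,
) {
    fun decide(proposal: Proposal): Decision {
        // Stage 1: deterministic policy, cheap and reproducible.
        if (proposal.action !in allowedActions) {
            return Decision.Deny("action '${proposal.action}' is not allowlisted")
        }
        if (proposal.highImpact) return Decision.NeedsOwnerApproval
        // Stage 2: contextual judgment for everything the rules did not settle.
        return reviewer.review(proposal)
    }
}
```

In this shape, the tuning problem lives mostly in the second stage: the deterministic rules are predictable but blunt, while the reviewer's strictness depends on the model and prompt behind it.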

The modules stay narrow. The Id generates pressure. The Ego mediates and plans. The Superego governs. This separation does not solve everything, but it makes the system easier to inspect and harder to treat as a black box.

Current limitations

The architecture is real and implemented, and the project already has a solid base, but it should still be evaluated as an experimental system rather than a mature general-purpose agent. The limitations should be stated plainly.

- The goal subsystem works in basic scenarios but remains unstable and needs much broader testing.

- The dashboard is intended for local, single-owner testing and debugging. It is not designed for multi-user or multi-tenant deployment.

- Prompt-injection defense is heuristic, not a full sandbox.

- Plugins run in-process as trusted code. Third-party isolation is not implemented yet.

- Memory retrieval and memory consolidation still need tuning, especially around over-filtering and LLM-dependent judgment.

- The evaluation suite is still limited in breadth. The Freud validation pipeline exists, but it still needs broader scenario coverage and stronger empirical validation.

- Prompt quality, planning quality, and governance quality still depend heavily on the quality of the underlying models.

- The current Id dynamics need much more experimentation and still leave substantial room for further exploration.

- The current set of actions and tools is still too limited for the agent to be broadly useful. Additional actions and tools will be added once the Ego loop and goal system are stable enough. Expanding the action surface too early would make the system harder to control safely.

Some omissions are deliberate. For example, NeoPsyke does not currently use sub-agents, not because delegation is uninteresting, but because trust propagation, authority boundaries, and containment need a deliberate security architecture behind them first.

Those are not footnotes. They are part of the current state of the project.

Why Kotlin

Most AI agent projects default to Python. This one uses Kotlin, and the choice is deliberate.

Type safety and the compiler give coding agents a tight feedback loop: many errors are caught at compile time rather than at runtime. Kotlin's sealed hierarchies are a natural fit for modeling cognitive types and action/state transitions. Coroutines also fit an architecture where stimuli, drives, goals, LLM calls, integrations, and instrumentation all operate concurrently.

Coding agents are also lowering the barrier to working in non-mainstream languages, and strongly typed code can actually make unfamiliar systems easier for them to understand and modify.

More pragmatically, I have deeper experience in Kotlin than in production-grade Python, and trying to build a system like this in well-architected Python was costing more time than the language choice was worth. The architecture mattered more than the ecosystem default.
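As an example of the sealed-hierarchy point, here is a sketch of how typed stimuli with different origins and trust levels might be modeled. The `Stimulus` subclasses mirror the sources listed earlier (user messages, internal drives, tool feedback, goals, timers), but the class names, the three-level `Trust` enum, and the specific trust assigned to each source are hypothetical. The key property is that origin comes from the type, never from the text, so an internal impulse cannot pass itself off as a user request, and the compiler rejects a `when` expression that forgets a stimulus type.

```kotlin
// Hypothetical stimulus model: origin is carried by the type, never inferred from text.
enum class Trust { OWNER, INTERNAL, EXTERNAL }

sealed class Stimulus(val trust: Trust) {
    abstract val text: String

    data class UserMessage(override val text: String) : Stimulus(Trust.OWNER)
    data class DriveImpulse(override val text: String, val drive: String) : Stimulus(Trust.INTERNAL)
    data class GoalTrigger(override val text: String, val goalId: String) : Stimulus(Trust.INTERNAL)
    data class TimerFired(override val text: String, val timerId: String) : Stimulus(Trust.INTERNAL)
    data class ToolFeedback(override val text: String, val tool: String) : Stimulus(Trust.EXTERNAL)
}

// Only a genuine UserMessage counts as a user request, whatever the text claims.
// No `else` branch: adding a new Stimulus subtype forces this `when` to be revisited.
fun isUserRequest(stimulus: Stimulus): Boolean = when (stimulus) {
    is Stimulus.UserMessage -> true
    is Stimulus.DriveImpulse,
    is Stimulus.GoalTrigger,
    is Stimulus.TimerFired,
    is Stimulus.ToolFeedback -> false
}
```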

Why open source it now

Because the architecture is already real enough to inspect, run, criticize, and improve. The validation harness is strong, and the project is now structured well enough to make outside contribution practical.

The right next step is not to overstate what has been built. It is to make the implementation public, keep the framing precise, and let the project be evaluated on the mechanism it actually implements.

The immediate priorities are stronger empirical evaluation, broader scenario coverage, more testing and tuning of needs, goals, and memory, and continued hardening of the governance model. If the mechanism holds up, the architectural case becomes stronger. If it does not, the framing should be narrowed.

Open source is the right environment for that process.

Try it or follow along

NeoPsyke is Apache 2.0 licensed. If you want to inspect the code, run the agent locally, or challenge the design, start here:

- GitHub repository: github.com/neopsyke/NeoPsyke

- Setup and usage: see the Getting Started guide or the repository README

- Issues and feedback: open an issue

The current release is best understood as a serious prototype: a working autonomous agent architecture with a clear point of view, real strengths, and real limitations.

NeoPsyke is built by Victor Garcia Toral. This post was written with AI assistance.